Hey everyone, it’s Ilya again! If you remember me from last summer, I’m the octopus guy; otherwise, don’t worry, I’ll introduce myself again. I’m now a third-year student at UC Berkeley studying Electrical Engineering and Computer Science, and this summer I’m tackling the problem of making a fun, brain-themed neurorobot! (For more information on this project, check out our collaborator Chris Harris’s blog post here.)

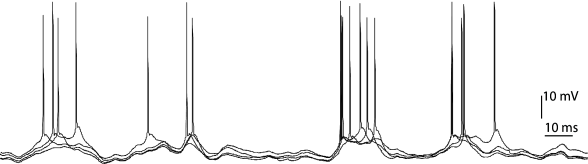

I’m specifically focusing on making the neurorobot see like we do: recognizing colors, shapes, and even complex objects in its surroundings. There’s one small problem, however. A human brain talks like this, using constantly evolving trains of neural spikes:

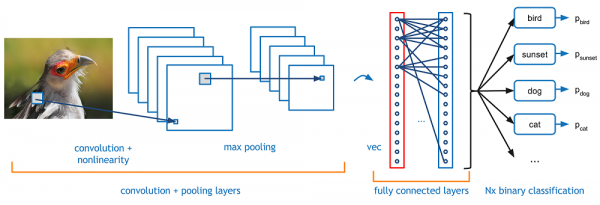

Our computer talks a little bit more like this, with a digital pre-trained neural network:

So, unfortunately, a computer is a little bit harder to teach shapes to than a little kid, since it’s lacking all those beautiful neural pathways that nature has been working on for millions of years. Instead, we have to build those pathways ourselves. I feel a little like I’m playing a digital Frankenstein, trying to give my creation a brain.

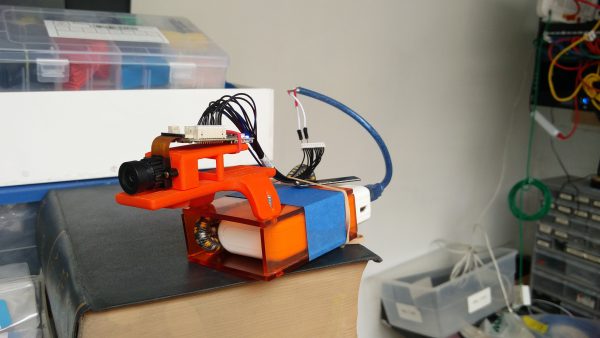

Speaking of my creation, this is my handheld video retrieval unit, a wireless IP camera. It’s a stand-in for the final robot so that I have data to work with, and its name is Weird Duck:

So far, I’m still working out the first basic problems of image recognition and localization (for the more technically minded: I’m working with Faster R-CNN, or faster region-based convolutional neural networks, as described in https://docs.microsoft.com/en-us/cognitive-toolkit/object-detection-using-faster-r-cnn). I hope to post some cool updates soon, but in the meantime, I highly suggest checking out this video of me tracking a glue stick by analyzing the probability distribution of orange color in the live video:
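The post doesn’t describe the glue-stick tracker in detail, so here is a minimal sketch of one common way to do this kind of color tracking, histogram backprojection. The idea: build a hue histogram from a sample of the target’s color, look up each pixel’s probability in that histogram, and take the probability-weighted centroid as the object’s position. The sketch assumes NumPy, a frame that has already been converted to hue values on OpenCV’s 0 to 179 scale, and a hue sample taken from the glue stick. The function names, bin count, and threshold are hypothetical choices for illustration, not the code behind the video.

```python
import numpy as np

BINS = 18        # hypothetical choice: 18 hue bins, each 10 units wide
HUE_MAX = 180    # OpenCV stores 8-bit hue in the range 0..179

def hue_histogram(sample_hues, bins=BINS):
    """Normalized hue histogram of a sample of the target's pixels."""
    hist, _ = np.histogram(sample_hues, bins=bins, range=(0, HUE_MAX))
    return hist / max(hist.sum(), 1)

def back_project(hue_frame, hist, bins=BINS):
    """Map every pixel to the probability of its hue bin under the target histogram."""
    idx = np.clip((np.asarray(hue_frame) * bins // HUE_MAX).astype(int), 0, bins - 1)
    return hist[idx]

def track(hue_frame, hist, threshold=0.05, bins=BINS):
    """Probability-weighted (row, col) centroid of target-colored pixels, or None."""
    prob = back_project(hue_frame, hist, bins)
    prob = np.where(prob >= threshold, prob, 0.0)  # suppress weak matches
    total = prob.sum()
    if total == 0:
        return None  # no pixel looks enough like the target color
    rows, cols = np.indices(prob.shape)
    return float((rows * prob).sum() / total), float((cols * prob).sum() / total)

if __name__ == "__main__":
    # Synthetic frame: bluish background (hue 100) with an orange patch (hue 15).
    frame = np.full((100, 100), 100)
    frame[40:50, 60:70] = 15
    orange_hist = hue_histogram(np.full(50, 15))
    print(track(frame, orange_hist))  # (44.5, 64.5)
```

In a live tracker you would recompute the hue frame for every video frame and call `track` again. On real images, OpenCV’s `cv2.calcBackProject` performs the same lookup step, and `cv2.CamShift` can follow the resulting probability blob from frame to frame.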

Image Sources:

- https://neuronaldynamics.epfl.ch/online/Ch7.S1.html

- https://adeshpande3.github.io/A-Beginner%27s-Guide-To-Understanding-Convolutional-Neural-Networks/