Education: With our impending (PAID!) Summer Research Experience for Teachers (RET), based on our previous successful Summer Research Fellowships, we wanted to highlight the successes of our pilot teacher for this upcoming program. Meet Jessica S., Neuroscientist, Plant Scientist, and Pea-Pod Costume Designer Extraordinaire! Jess participated in the Summer of 201’s undergraduate research fellowship as our first teacher […]

Biz: Neuroscience Experiment Intern Wanted (Downtown Ann Arbor). Compensation: $15/hr based on 40-hour work week. Employment type: full-time internship. Backyard Brains is seeking a neuroscience intern to continue ongoing experiments! You: a STEM undergrad, currently taking a break from the classroom (recent grad or gap year preferable); passionate about scientific discovery and designing innovative, impactful experiments; experienced in or excited by a future […]

Education: Hey everyone! I’m Pablo, a junior from Nido de Aguilas High School in Santiago, Chile. In my free time, I like to doodle and run. My project is a multi-channel version of the experiment that my colleague and friend Cristian developed: it consists of using the SpikerShield Pro’s ability to get data from multiple channels […]

Education: Hi Everyone. Juan here! My two-month tour with Backyard Brains has reached its end, and I’m really grateful to have had the opportunity to work on this project. I had three activities during the “practica” here at Backyard Brains: Recording from the Ganglia of Snails, Helping on the Anemone Project, and Assisting in Outreach. The snail recording was my […]

Education: Hi! Juan Ferrada here from the University of Santiago again to give you an update on my project with Backyard Brains. Main Project – Single unit recording from Snail Neurons. First mission – Isolate the Neurons. As we discussed a month ago, we are trying to record the individual neurons of the giant pacemaker cells of […]

Fellowship: Call for Undergraduates in Biology or Engineering Fields: Are you a neuroscience nerd? Do you want to learn how the brains of animals like squids or dragonflies work? Is your background in Electrical, Mechanical or Computer Engineering? Want to develop your own innovative experiments and publish your results? Learn to communicate those stunning results with the […]

Education: Over 11 sunny Ann Arbor weeks, our research fellows worked hard to answer their research questions. They developed novel methodologies, programmed complex computer vision and data processing systems, and compiled their experimental data for poster, and perhaps even journal, publication. But, alas and alack… all good things must come to an end. Fortunately, in research, […]

Fellowship: So memory hacking during sleep is a thing? From endless runs back and forth to Om of Medicine chasing down my subjects, to countless hours staring at the Mona Lisa of sleep (Delta waves), and many other ups and downs during this summer… I can finally tell you it is quite possible!! As August is here, […]

Education: Hey everyone! My summer of research in Ann Arbor has come to an end and it’s been an awesome experience. It’s been a busy 10 weeks of making daily improvements to my rig, resoldering the flyPAD, collecting data, and presenting what I found to others. The original goal of this project was to see if […]

Education: Wow, what a summer!!! I have some exciting news to report… I didn’t get bit by ONE mosquito all summer!!! Just kidding, my project is a little more exciting than that! I did it! I successfully put together and executed a project that I was a little iffy about back in May, and developed a new-found […]

Education: Hi everyone! The summer is finally coming to a close and I am excited to share all that I have learned from my time as a Backyard Brains fellow with you. If you’ve been here since the beginning, thank you so much for following along with me and my squiddos for the past ten weeks […]

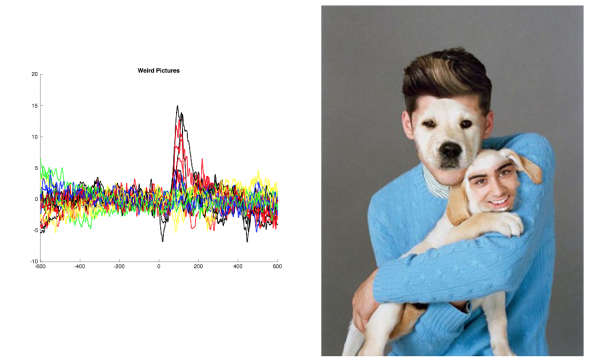

Education: G’day again! I’ve got data… and it is beautiful! More on this below… I am pleased to update my progress on my BYB project, Human EEG visual decoding! If you missed it, here’s the post where I introduced my project! Since my first blog post, I have collected the data from 6 subjects with the stimulus presentation program […]
