-

Biz: As part of our operations, we give talks to the public on a weekly basis. We begin with neural signals from the legs of cockroaches, display of which carries low risk, as the legs can grow back and cockroach leg neural signals are not covered under United States privacy laws. But, as we have been expanding our […]

-

Education: Welcome! This is Kylie Smith, a Michigan State University undergraduate writing to you from a basement in Ann Arbor. I am studying behavioral neuroscience and cognition at MSU and have been fortunate enough to have landed an internship with the one and only Backyard Brains for the summer. I am working on The Consciousness Detector […]

-

Education: Meet the EMG Reaction Timer! The EMG Reaction Timer will settle once and for all who has the fastest draw in the West… or you can use it to perform neuroscience experiments, at home or in the classroom, exploring how we respond to different kinds of stimuli! The Reaction Timer works with our EMG SpikerBox and Spike […]

-

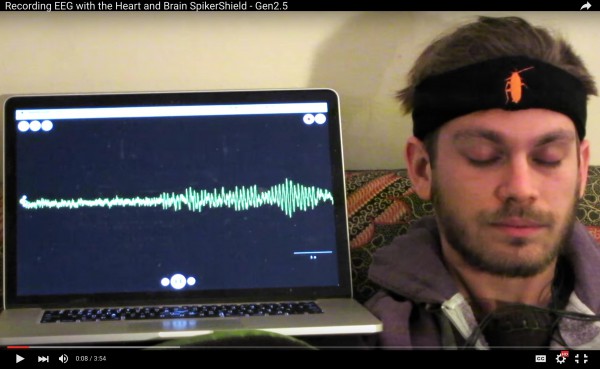

Software: We have had our own custom Android and iPhone apps for quite some time now, but if we wanted to use a PC or laptop, we’d turn to Audacity, the free open-source audio processing program. It works well but is not made for neural recording. Hence, today, we announce the beta release of our first custom […]

-

Software: While most of us were enjoying our relaxing summer vacations, our developer Nate was hard at work porting our Backyard Brains mobile application to the Android platform. We have just released our first version to the Android Market, and yes, it’s a free download. We are happy to now add Android phones to our […]

-

Software: ByB has had magnificent success using Audacity to view and record its neural data, and Tim has begun thinking about modifying Audacity to contain a digital oscilloscope mode. Here is what he wrote to the Audacity team: Hi folks, I just sent an e-mail regarding getting Audacity to work on the OLPC (One Laptop Per Child) project, […]

-

OLPC: Backyard Brains is beginning to make inroads with the OLPC (One Laptop Per Child) initiative. This week we were able to display spike waveforms from a cockroach leg in real time on the OLPC laptop. We are using the “Measure” application to display the data. Note the spikes on the XO PC below from our prototype […]