Hello again everyone! It’s Yifan here with the songbird project. Like my colleagues, I attended the 4th of July parade in Ann Arbor, which was a lot of fun. I made a very rugged cardinal helmet that ended up looking like a rooster hat, but I guess a rooster also counts as a kind of bird, so that turned out just fine.

Anyways, since the last blog post, I have shifted my focus to the user interface. After some discussions with my supervisors, we decided to change the scheme a little. Instead of using machine learning to detect onsets in a recording, we are going to build an interface that lets users select an appropriate volume threshold for the pre-processing. Then we will use our machine learning classifier to classify the interesting clips in more detail.

Why threshold based on volume, one might ask? Well, volume is the most straightforward property of sound for us to work with. During tech trek, a kid asked me a very interesting question: when you are detecting birds in a long recording, how do you know the train sound you ruled out as noise isn’t a bird that just sounds like a train? Although the answer to this one should be quite obvious, we should still give users the freedom to keep what they want in the raw data. Hence, I’ve developed a simple mechanism that lets every user decide what to keep and what to discard before classifying.

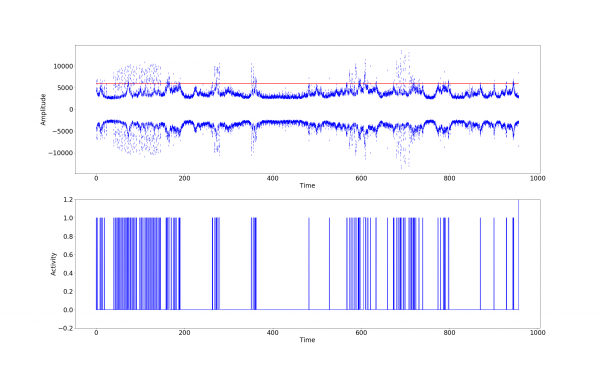

This figure is a quick visual representation of a 15-minute field recording after being processed by the mechanism I was talking about. As you can see, there is a red line in the first plot. That is the threshold, which the user defines. Anything louder than this line is marked as “activity”; anything quieter is marked as “inactivity.” The second plot shows the activity over time. However, a single activity, like a bird calling, might have long silent periods between calls. In order not to count those as multiple activities, we have a parameter called the “inactivity window,” which is basically the amount of silence required between two activities for them to be counted as separate.
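
As a rough illustration of the thresholding and inactivity window described above, here is a minimal Python sketch. It is not the project's actual code: the function name `find_activities`, the per-frame volume list, and the `frame_seconds` parameter are all assumptions made for the example. Loud frames that are separated by less silence than the inactivity window get merged into one activity.

```python
from typing import List, Sequence, Tuple


def find_activities(
    volume: Sequence[float],
    threshold: float,
    frame_seconds: float,
    inactivity_window: float,
) -> List[Tuple[float, float]]:
    """Return (start, end) times in seconds for each merged activity.

    `volume` holds one loudness value per frame (hypothetical input format).
    A frame counts as activity when it is louder than `threshold`.
    """
    activities: List[Tuple[float, float]] = []
    for i, level in enumerate(volume):
        if level <= threshold:
            continue  # quiet frame: inactivity
        start = i * frame_seconds
        end = (i + 1) * frame_seconds
        # Merge with the previous activity if the silence between them
        # is shorter than the inactivity window.
        if activities and start - activities[-1][1] < inactivity_window:
            activities[-1] = (activities[-1][0], end)
        else:
            activities.append((start, end))
    return activities


# One value per second; loud frames at seconds 1, 4, and 11.
volume = [0, 5, 0, 0, 5, 0, 0, 0, 0, 0, 0, 5]
short_window = find_activities(volume, threshold=1, frame_seconds=1.0, inactivity_window=0.5)
long_window = find_activities(volume, threshold=1, frame_seconds=1.0, inactivity_window=5.0)
print(short_window)  # three separate activities
print(long_window)   # the first two are merged into one
```

With the small window every loud frame stays its own activity, while the larger window bridges the two-second gap and leaves only the six-second gap as a separator.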

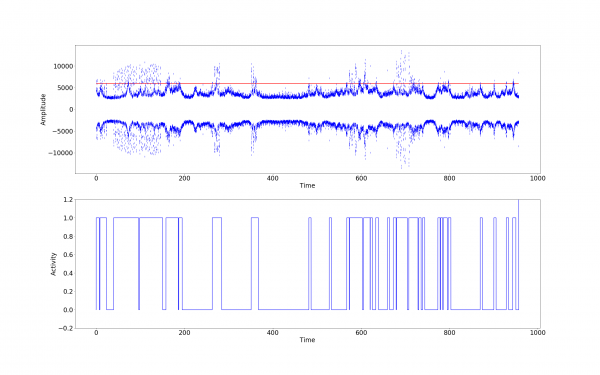

In the above figure, the inactivity window is set to 0.5 second, which is very small. That is why you can see so many separate spikes in the activity plot. Below is the the plot of the same data, but with a inactivity window of 5 seconds.

Because the inactivity window is larger, smaller activities are now merged into longer continuous ones. This parameter can also be customized by users. After this pre-processing step, we will chop up the long recording based on the activities and run the resulting clips through the pre-trained classifier.
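
The chopping step could look something like the sketch below. Again, this is only an illustration under assumed names: `chop_recording`, the plain list of samples, and the `(start, end)` activity tuples in seconds are hypothetical, and the classifier itself is left out.

```python
from typing import List, Sequence, Tuple


def chop_recording(
    samples: Sequence[float],
    sample_rate: int,
    activities: Sequence[Tuple[float, float]],
) -> List[Sequence[float]]:
    """Cut one clip out of `samples` for each (start, end) activity in seconds."""
    clips = []
    for start, end in activities:
        first = int(round(start * sample_rate))
        # Clamp the end so an activity that runs to the end of the
        # recording does not index past the last sample.
        last = min(int(round(end * sample_rate)), len(samples))
        clips.append(samples[first:last])
    return clips


# Toy recording: 20 samples at 2 samples per second (10 seconds long).
samples = list(range(20))
clips = chop_recording(samples, sample_rate=2, activities=[(1.0, 3.0), (8.0, 12.0)])
for clip in clips:
    print(len(clip), clip)
```

Each clip would then be handed to the classifier on its own, so the long stretches of inactivity never need to be processed.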

Unfortunately, my laptop completely gave up on me a couple of days ago, and I had to send it in for repair. I would love to show more data and graphs in this blog post, but I’m afraid I have to postpone that to my last post. Anyways, I wish the best for my laptop (as well as the data on it), and see you next time!