***Written by Nour Chahine***

Can you use a brain-computer interface to perform magic tricks? Guess what card someone is thinking of?

That is exactly what my TinyML project, Pick a Card, is all about.

I am building a small screen display that shows a set of playing cards while I record the subject's EEG. The subject chooses a card, and an AI algorithm then determines which one it was.

But before I get into the specifics, let me tell you how this project came about.

Ever thought about how our brain automatically registers our surrounding environment or recognizes someone’s voice over the phone? The human brain is capable of processing up to one million bits per second of sensory information, and yet we barely feel the effort. Even though we do not consciously notice these processes taking place, our brains are quite loud about it!

Electroencephalography or EEG is a technique that allows us to measure our brain’s electrical activity noninvasively and is one of the most widely used techniques for “reading the brain.” Electrodes are placed in specific locations on a subject’s scalp and are used to measure the electrical activity of a targeted brain region, or the general activity of the entire cortex.

The challenge with EEG is that it can be difficult to discern specific patterns of activity, especially since the brain is always multitasking and each neuron is up to something different! A strong EEG signal appears when many neurons within a network activate synchronously, because their electrical activity adds up into one large signal that can be detected on the surface of the scalp. Sensory processes tend to produce such synchronous activity, so EEG is a great technique for studying sensory processing.

Theoretically, if we are able to decode the brain’s electrical activity generated in response to sensory information, we can deduce some valuable characteristics related to that information. In fact, this is what neuroscientists and biomedical engineers have been doing for quite a while in a wide range of applications, such as enabling individuals with disabilities that hinder their movement or communication to use brain-computer interfaces to spell out what they want to say!

So what does this have to do with my card-picking project?

If an algorithm can decode which card you picked from your brain’s electrical activity, the same approach could be used to decode other information too!

So I will be training a machine learning algorithm to recognize steady-state visually evoked potentials (SSVEPs) and/or the P300 "surprise" signal, so that it can make its guess on the fly.

Just what are SSVEPs and P300 signals?

SSVEP Signal

The SSVEP (Steady-State Visually Evoked Potential) is a brain signal that arises when the retina is excited with a visual stimulus that flickers at a certain frequency f, usually ranging between 3.5 Hz and 75 Hz. The resulting SSVEP oscillates at f and/or at integer multiples of f (its harmonics). How is this useful?

Suppose we are showing four cards on the screen, and each of these cards is flickering at a particular frequency.

By analyzing the SSVEP signal that arises when the subject is focusing on one of the cards, we can determine which card it was, because the SSVEP will oscillate at that card's flickering frequency or at multiples of it.
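The idea above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the pipeline used in this project: the sampling rate, the four flicker frequencies, and the helper names `power_at` and `guess_card_ssvep` are all made up for the example, and the "EEG" is a synthetic sine wave buried in noise.

```python
import math
import random

def power_at(signal, fs, freq):
    """Power of `signal` at one frequency (a single DFT term evaluated directly)."""
    n = len(signal)
    re = sum(x * math.cos(2 * math.pi * freq * i / fs) for i, x in enumerate(signal))
    im = sum(x * math.sin(2 * math.pi * freq * i / fs) for i, x in enumerate(signal))
    return (re * re + im * im) / (n * n)

def guess_card_ssvep(signal, fs, card_freqs, n_harmonics=2):
    """Index of the card whose flicker frequency, plus harmonics, carries the most power."""
    scores = [
        sum(power_at(signal, fs, f * h) for h in range(1, n_harmonics + 1))
        for f in card_freqs
    ]
    return scores.index(max(scores))

# --- Synthetic demo (every value below is an assumption) ---
random.seed(0)
FS = 250                              # sampling rate in Hz
CARD_FREQS = [6.6, 8.0, 10.0, 12.0]   # hypothetical flicker frequency of each card
DURATION = 4                          # seconds of recording
target = 2                            # the subject "looks at" the 10 Hz card

eeg = [
    math.sin(2 * math.pi * CARD_FREQS[target] * i / FS)               # response at f
    + 0.3 * math.sin(2 * math.pi * 2 * CARD_FREQS[target] * i / FS)   # response at 2f
    + random.gauss(0, 1.0)                                            # background noise
    for i in range(FS * DURATION)
]

guess = guess_card_ssvep(eeg, FS, CARD_FREQS)
print("Guessed card index:", guess)
```

One design point worth noting: because the score includes harmonics, the card frequencies should be chosen so that none is an integer multiple of another (6 Hz and 12 Hz, for example, would be hard to tell apart).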

P300 Signal

The P300 signal is a positive deflection in the EEG that arises around 300 milliseconds after a rare, attended stimulus. It is typically evoked by exposing a subject to a series of stimuli (they could be auditory, visual, somatosensory, etc.) in which one occurs less frequently than the others. The subject is told to focus on the less frequent stimulus, and each time this stimulus is presented, the P300 signal arises.

So how does this help my project?

Suppose we are showing four cards on the screen, but this time, only one card is emphasized at a time.

We tell the subject to pick a card and focus on this card. Then we emphasize/flash the cards on the screen one at a time, at random. The P300 signal arises when the subject’s chosen card is flashed or emphasized.
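The procedure above can be sketched as follows. Again, this is a hedged illustration rather than the project's actual code: the sampling rate, the epoch length, the 250 to 500 ms scoring window, the card labels, and the helper names are assumptions, and the epochs are synthetic (noise, plus a bump peaking at 300 ms whenever the chosen card is flashed).

```python
import math
import random

FS = 250                 # sampling rate in Hz (assumed)
EPOCH_S = 0.8            # seconds of EEG kept after each flash (assumed)
WINDOW_S = (0.25, 0.5)   # time window in which we look for the P300 (assumed)

def mean_window_amplitude(epochs, fs=FS, window=WINDOW_S):
    """Average the epochs sample by sample, then average inside the window."""
    n = len(epochs[0])
    avg = [sum(e[i] for e in epochs) / len(epochs) for i in range(n)]
    start, stop = int(window[0] * fs), int(window[1] * fs)
    return sum(avg[start:stop]) / (stop - start)

def guess_card_p300(epochs_by_card):
    """Pick the card whose averaged response is most positive in the window."""
    scores = {card: mean_window_amplitude(eps) for card, eps in epochs_by_card.items()}
    return max(scores, key=scores.get)

def fake_epoch(is_target, rng):
    """Synthetic epoch: noise, plus a positive bump at 300 ms for the chosen card."""
    epoch = []
    for i in range(int(EPOCH_S * FS)):
        t = i / FS
        bump = 5.0 * math.exp(-((t - 0.3) ** 2) / (2 * 0.05 ** 2)) if is_target else 0.0
        epoch.append(bump + rng.gauss(0, 5.0))
    return epoch

# --- Synthetic demo ---
rng = random.Random(1)
cards = ["A", "B", "C", "D"]   # hypothetical labels for the four cards
chosen = "C"                   # the card the subject silently picked

flash_order = cards * 20       # each card is flashed 20 times...
rng.shuffle(flash_order)       # ...one at a time, in random order

epochs_by_card = {c: [] for c in cards}
for card in flash_order:
    epochs_by_card[card].append(fake_epoch(card == chosen, rng))

guess = guess_card_p300(epochs_by_card)
print("Guessed card:", guess)
```

Averaging is what makes this work: a single epoch is dominated by noise, but the noise shrinks as more flashes of the same card are averaged, while the time-locked bump stays put.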

For my project, I will be implementing both SSVEPs and P300s in the card detection process and comparing the methods to see which one delivers better results.

What have I done so far?

For the Graphical User Interface (screen display), I have so far been using the Wio Terminal. I programmed GUIs for both the SSVEP and the P300 experiments.

I am currently at the stage of collecting preliminary data for the SSVEP component of my project. Due to a time delay imposed by the Wio Terminal’s image display capabilities, I have been flashing only one of the cards for reliable frequency readings. At a later stage, I will be transferring my setup to a web application to have all cards flashing simultaneously.

During the experiment, electrodes are placed over the occipital lobe region (where the visual cortex is) as this is where SSVEPs are usually most noticeable. My first subject, Ariyana, can be seen below with the BYB electrodes on the back of her head.

Here is a sneak peek of some cool results I have collected so far!

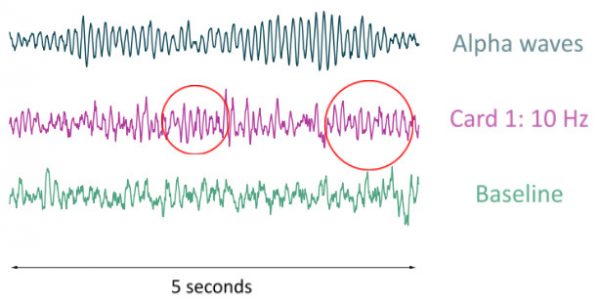

This first figure displays raw EEG data from my colleague Ariyana in three settings: closing her eyes (which generates a roughly 10 Hz signal known as the alpha wave), looking at a card flashing at 10 Hz, and her baseline activity. Notice the 10 Hz oscillatory pattern in the second plot?

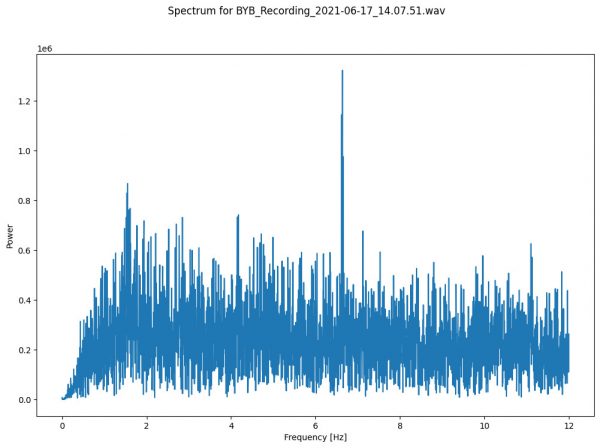

A better way to visualize the frequencies present in a signal is to plot its power spectral density. It describes the power present in the signal as a function of frequency, per unit frequency. The EEG signal from my second subject, Sarah, showed a significant increase in signal power at 6.6 Hz as Sarah was looking at a card flashing at 6.6 Hz!
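For readers who want to see what goes into such a plot, here is a minimal sketch of one way to estimate a power spectral density, the plain periodogram, using only the Python standard library. It is not necessarily how the figure above was produced: the sampling rate, recording length, search band, and function name are assumptions, and the signal is a synthetic 6.6 Hz sine wave in noise.

```python
import cmath
import math
import random

def periodogram(signal, fs):
    """One-sided periodogram: (freqs in Hz, PSD in signal-units squared per Hz)."""
    n = len(signal)
    mean = sum(signal) / n
    x = [v - mean for v in signal]           # remove the DC offset
    freqs, psd = [], []
    for k in range(n // 2 + 1):
        xk = sum(v * cmath.exp(-2j * math.pi * k * i / n) for i, v in enumerate(x))
        p = abs(xk) ** 2 / (fs * n)          # power per unit frequency
        if 0 < k < n / 2:
            p *= 2                           # fold in the negative frequencies
        freqs.append(k * fs / n)
        psd.append(p)
    return freqs, psd

# --- Synthetic demo (all values are assumptions) ---
random.seed(2)
FS = 100           # sampling rate in Hz
DURATION = 5       # seconds, giving a frequency resolution of 1/5 = 0.2 Hz
STIM_FREQ = 6.6    # flicker frequency of the card

eeg = [
    math.sin(2 * math.pi * STIM_FREQ * i / FS) + random.gauss(0, 1.0)
    for i in range(FS * DURATION)
]

freqs, psd = periodogram(eeg, FS)

# Look for the strongest peak inside a plausible SSVEP band (3 to 40 Hz here).
band = [(p, f) for f, p in zip(freqs, psd) if 3.0 <= f <= 40.0]
peak_power, peak_freq = max(band)
print("Peak frequency (Hz):", peak_freq)
```

A single periodogram is quite noisy. A common refinement is Welch's method, which averages periodograms over overlapping segments and is available in SciPy as `scipy.signal.welch`.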

After I collect enough data from the SSVEP trials, I will use it to train a machine learning algorithm to recognize the dominant frequency, which would allow me to determine which card someone is focusing on.

I will be following a similar procedure with the P300 component of my project, and I am very excited to see which method gives me the most reliable results! Which one do you think will win?

About Me

I am a Lebanese electrical and computer engineering student at the American University of Beirut. As a neuroscientist in the making, I am quite excited to take part in the neuro-revolution happening at Backyard Brains over the summer! I am mainly interested in studying the mechanisms of memory consolidation during sleep and developing devices that can help treat dementia-related disorders. Other than scientific inquiry and tinkering, I enjoy everything music-related and spend a good portion of my time singing and dancing.