Experiment— Written by Milica Manojlovic — We are all familiar with the Pinocchio story, right? A wooden boy whose nose would grow every time he lied. What if I told you that, with the right equipment, you could feel like Pinocchio in just a few minutes? All you need is a massager (>50 Hz frequency […]

Fellowship— Written by Summer Eunhyung Ann — I am Summer, a Computer Science and Neuroscience undergraduate at the University of Michigan, a part-time artist, nerd, researcher at Michigan Medicine, and an intern at Backyard Brains for the summer. My plan is to create a do-it-yourself (DIY) eye tracker to investigate the hypothesis that humans, having […]

Fellowship— Written by Milica Milosevic & Amanda Putti — Considering the slime craze of 2016, we’re pretty sure we have all played with slime, or at least know what it is. But have you ever heard of slime molds? Slime molds, such as Physarum polycephalum, are a type of single-celled protist that […]

Fellowship— Written by Tom DesRosiers, Elsa Fedrigolli & Luka Caric — As the only fully equipped team, we started the week strong, with an advantage over the others. During the first few days, our brains were fried and it was difficult to get started. But by the end of the first week we were […]

Education— Written by Tim Marzullo — Jeopardy is an American institution, and we have fond memories of watching its episodes with our families when we were young. We still watch reruns to relax, and recently something caught our eye. While watching episode 8364, which originally aired on March 21, 2021 (Season 37) with guest host Dr. […]

Fellowship: The Summer Fellowship of the Brain is back, with a twist! A cohort of seven STEM undergraduates from the US and Serbia will spend all of July in Belgrade, the Serbian capital. There, in the lab makerspace of the Center for Promotion of Science, they’ll conduct their neuroscience research. Best of all, the resulting experiments […]

AI Edited: In a world of secrets, plants are speaking up. And science is all ears! As a recent study published in the journal Cell shows, our leafy friends make popping or clicking sounds when under duress, such as when they are thirsty or injured. But how exactly do plants make sounds? A team of scientists from […]

Internship: Are you a teen or young adult (13–30 years old) who identifies as neurodivergent, has ADHD or dyslexia, or is autistic? Or do you know someone who is? If so, a paid virtual internship with NeuroVivid this July and August could be just what you’re looking for. As a co-designer for their […]

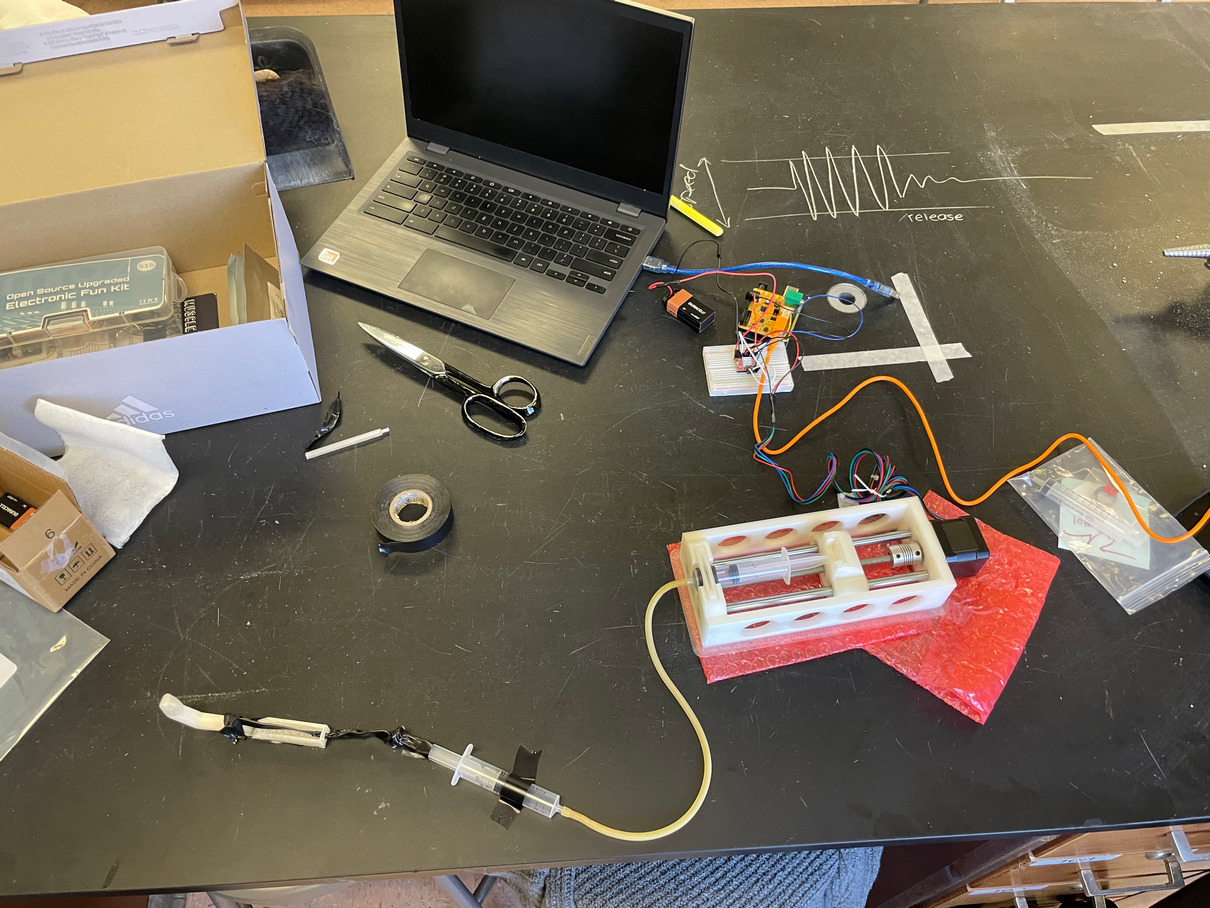

Students: A pump made of two plastic syringes and a pushing block powered by a stepper motor, one of our Muscle SpikerShields, and a 3D-printed base: that’s all Kiley Branan, a high school senior from Indiana, needed to put together a prototype of a finger that you can open and close by flexing your […]

Education— Written by Tim Marzullo — Over the past 80 years, we have used computers to make calculations much faster than our human brains can. From the post-WWII ENIAC predicting ballistic trajectories to the Google Colab notebooks our team now uses to process electrophysiology data in the cloud, […]

Education: Editor’s note: This is Part II. You can read Part I here. Danae: Hello, I am Danae Madariaga, a senior at Alberto Blest Gana High School. For three months, I have participated in a data collection project with Etienne, Tim, and Derek. Throughout this time, I have learned many things, such as the use of […]

Marketing: Backyard Brains has just added another feature to our ever-growing list of media appearances! This time, our co-founder and CEO, Dr. Greg Gage, spoke at The Gastronauts, Duke University’s monthly seminar and podcast series. The seminar is organized by researchers passionate about gut-brain matters. But when one invites the driving force behind Backyard […]