Biz: Are you part of an organization registered as a public charity in the US or Canada? If yes, now is the time to apply for up to $1,500 that you can use towards planning outreach for next year’s Brain Awareness Week (March 14 – March 20, 2022)! If awarded, you could use this money […]

Education— Written by Natalia Díaz — Hello. I’m here again! And this will be my last update on my neuromathematical project. If you don’t remember me, I’m Natalia Díaz, and I’m doing my university internship at Backyard Brains. (If you’re wondering what I’m talking about when I say neuromathematics, check out my first and second […]

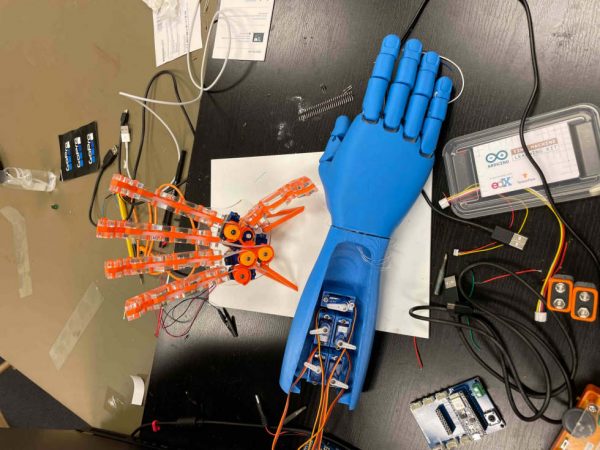

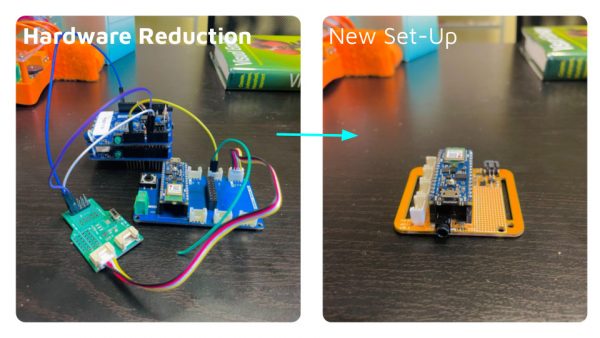

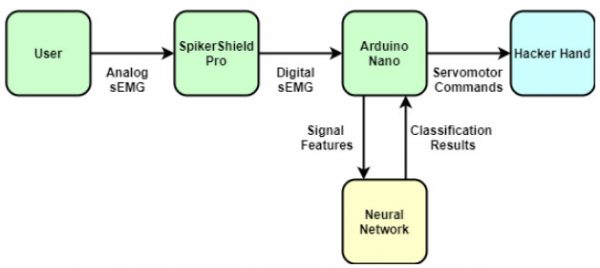

Fellowship— Written by Fredi Mino — Welcome to the final update on my TinyML Robot Hand project! After collecting sEMG (surface electromyography) data, feeding them into a neural network, and producing a machine learning model that can accurately classify different hand gestures, I can proudly say that my eagle has landed! Deploying and integrating the […]

Fellowship— Written by Sofia Eisenbeiser — Well, folks, we made it. The last week, the final frontier, time to sink or swim. Luckily, I’ve spent the past 10 weeks with the most expert swimmers of all, our BYB fish. And, boy, have they taught me well. So, let’s dive in! Alright, where were we? In […]

Fellowship— Written by Nour Chahine — Before I start discussing my updates, I have an announcement to make: I solemnly swear that I will solely be working with SSVEPs for the remainder of my project! Over the past few weeks, I worked on feeding different forms of data into the neural network. I mainly applied […]

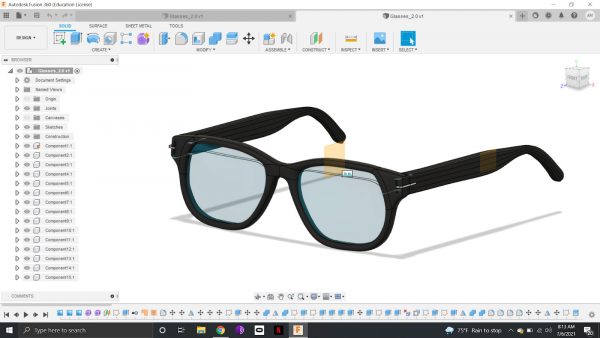

Fellowship— Written by Ariyana Miri — Welcome back FOMO gang! If you’ve been following along with my FOMO glasses journey, then you know I’m trying to build a pair of glasses that capture a photo every time you blink. In my first post, we discussed the idea behind my project, and the implications the success […]

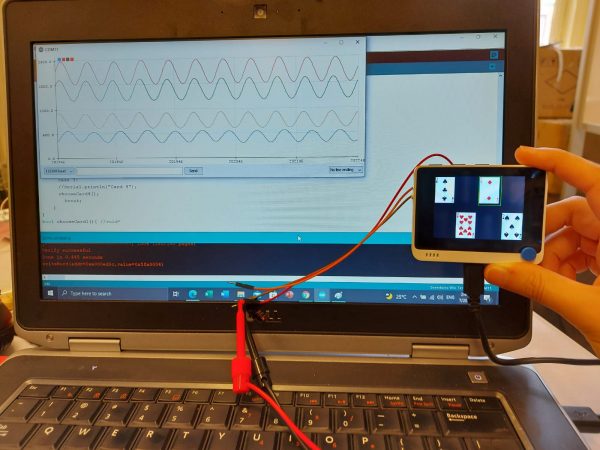

Fellowship— Written by Sachin Pillai — Hello to everyone out there! This is Sachin here again with updates on your very own portable poker bluff detector. I ended my last blog post mentioning how I developed the capability to collect electrodermal activity during full rounds of poker. This has allowed me to amass a large […]

Fellowship— Written by Samuel Kuhn — So, the summer is coming to an end, and I have faced off against a behemoth of a challenge. The readiness potential has proved elusive at every turn. I have tried different hardware, software, different electrode locations and experimental locations, different participants with different hair lengths, and yes, I […]

Fellowship— Written by Sarah Falkovic — Welcome to my final post on project lie detector! Previously, I discussed how I moved from strict galvanic skin response research to P300 signals. Here I am now to update you on this “I saw it” response! As a quick recap: the P300 signal is an EEG (brain-wave) measurement […]

Fellowship— Written by Fredi Mino — Hello again! Since the last time we met, I have been working towards producing a machine learning model that can accurately predict the different gestures/finger movements that we are classifying, and (spoiler alert) it seems like we are almost there! If we are to have any chance of success, […]

Fellowship— Written by Ariyana Miri — Welcome back! If you’ve been following along with my FOMO glasses journey, then you know I’m trying to build a pair of glasses that capture a photo every time you blink. In my last post, we discussed the idea behind my project, and the implications the success of the project can […]

Fellowship— Written by Sofia Eisenbeiser — Please give a warm welcome to the newest additions to Backyard Brains: the tiger barbs! Hello, everyone! It’s Sofia, coming at you with an update on the BYB fish. So, we last left off with making the discovery that one of our African cichlid fish likes to chase a laser pointer around […]
